Cleaning up the cloud: decarbonizing the world’s data centers

This article was previously published in our newsletter. If you're not already a subscriber, sign up here.

The cloud is too small and too slow! The worldwide network of interconnected servers that we call the ‘cloud’ already processes and stores huge amounts of data: more than 100 billion terabytes in 2023 by some estimates. It runs applications and delivers a wide range of content, including streaming video, webmail and social media.

Tomorrow, it will have to do much, much more: from supporting millions of autonomous vehicles to handling remote medical operations, as well as powering artificial intelligence (AI) applications that are still being invented. To do so, it will need to increase its data processing and storage capacity by several orders of magnitude and operate with greater speed to avoid latency (delay).

This is driving a boom in the construction of data centers, the physical buildings that will house all those new servers. The global data center market was worth over $260 billion in 2022 and is expected to grow by 11% a year to reach $600 billion by the end of this decade, according to consultancy P&S Intelligence.
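As a rough check on these figures, the projection can be reproduced with simple compound growth. The sketch below assumes that "the end of this decade" means 2030, giving eight years of growth from the 2022 base; the function name `project_market` is purely illustrative and is not taken from the P&S Intelligence analysis.

```python
def project_market(start_value_bn: float, annual_growth: float, years: int) -> float:
    """Compound start_value_bn at a fixed annual growth rate for `years` years."""
    return start_value_bn * (1.0 + annual_growth) ** years


# Figures from the article: $260 billion in 2022, growing 11% a year.
# Assumption: "the end of this decade" is taken to mean 2030 (8 years of growth).
projected_2030 = project_market(260.0, 0.11, 2030 - 2022)
print(f"Projected 2030 market: ${projected_2030:.0f} billion")
```

Under that assumption the result comes out just under $600 billion, consistent with the forecast quoted above.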

The issue is that data centers consume a lot of power, not only to run the servers themselves but also to cool them. Up to 30% of the power consumption of a large data center goes to cooling. Data centers are already responsible for around 0.6% of global greenhouse gas emissions and 1% of electricity use, notes the International Energy Agency.

That figure is set to grow rapidly. The power usage of a standard processing unit, or chip, inside a server is around 75-200 watts, similar to a light bulb. But one of Nvidia’s GPU chips, which are essential to AI applications, needs 5-10 times as much power and will generate 5-10 times as much heat. This is expected to drive the power demand of a typical hyperscale data center from 30-40 megawatts (MW) currently to over 100 MW in three to five years’ time. Several facilities of this size, and greater, are already under construction.
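To make the scale concrete, the numbers quoted here can be worked through in a few lines. This is a back-of-the-envelope sketch, not a sizing method: it assumes the widest possible GPU range (lowest chip wattage times the lowest multiplier, highest times the highest), and it assumes the "up to 30%" cooling share stays unchanged as facilities grow. The function names are illustrative.

```python
STANDARD_CHIP_WATTS = (75, 200)   # standard chip power range, from the article
GPU_MULTIPLIER = (5, 10)          # "5-10 times as much power", from the article
COOLING_SHARE = 0.30              # upper bound ("up to 30%"), from the article


def gpu_power_range(chip_watts, multiplier):
    """Widest plausible GPU power range: low x low up to high x high (an assumption)."""
    return chip_watts[0] * multiplier[0], chip_watts[1] * multiplier[1]


def cooling_load_mw(facility_mw, cooling_share=COOLING_SHARE):
    """Power spent on cooling, assuming a fixed share of total facility power."""
    return facility_mw * cooling_share


gpu_low, gpu_high = gpu_power_range(STANDARD_CHIP_WATTS, GPU_MULTIPLIER)
print(f"GPU chip power: {gpu_low}-{gpu_high} W")

for facility_mw in (40, 100):
    print(f"{facility_mw} MW facility: up to {cooling_load_mw(facility_mw):.0f} MW for cooling")
```

On these assumptions a single AI chip draws somewhere between a few hundred watts and about 2 kW, and the cooling load of a hyperscale facility would rise from roughly 12 MW to roughly 30 MW if cooling efficiency did not improve.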

Cooling down

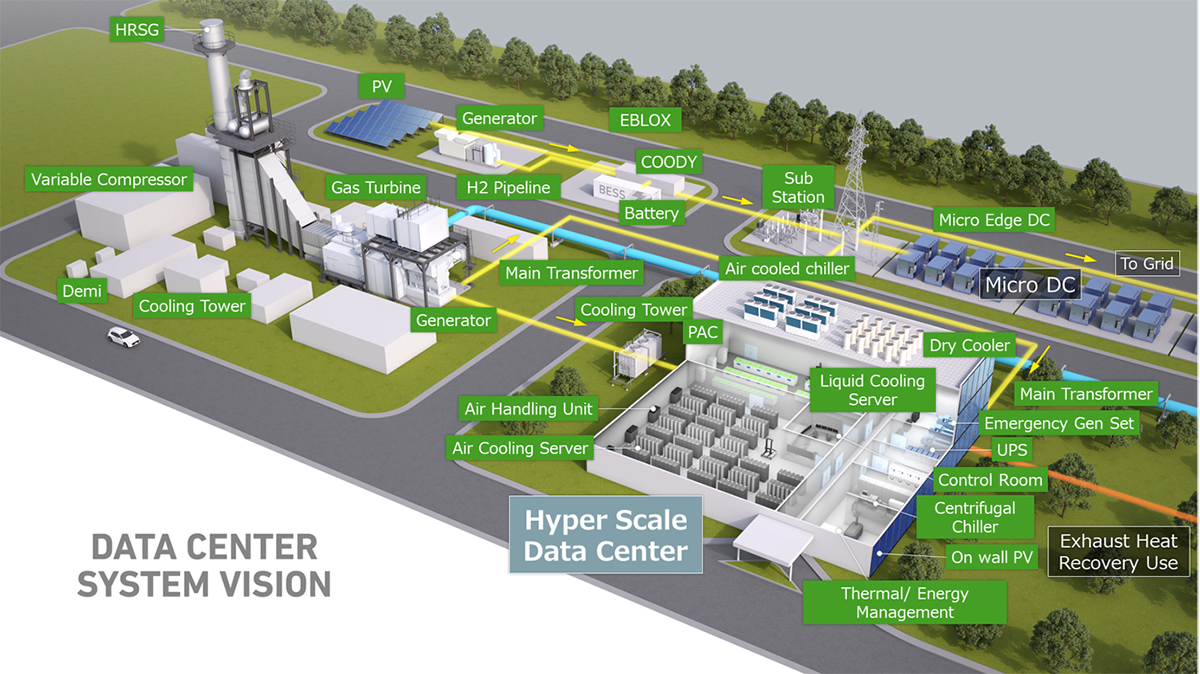

Helping data centers to become more energy efficient and to decarbonize their residual power requirements is, therefore, both a rapidly growing need and an opportunity. It is one that Mitsubishi Heavy Industries (MHI) Group is perfectly placed to seize, given our long heritage not only in power generation equipment but also in cooling technology, in configuring and running control systems and in the engineering and construction expertise that brings all this together.

Starting with energy conservation, today’s server rooms are generally air cooled via either chillers or coil-based air handling units, which use a lot of electricity. Installing the latest centrifugal chillers can reduce that, but the improvement is limited by the heat transfer capabilities of air itself, and the new AI chips simply run too hot for air cooling to be effective.

Next-generation cooling systems will therefore use liquid coolants, such as a specially formulated oil, in innovative ways. The first type is immersion cooling, where a number of servers are literally lowered into a container filled with the coolant, which we call the ‘bathtub’. MHI has developed this solution and we are currently testing it at a data center owned by KDDI, one of Japan’s big telcos, where it achieved a 94% reduction in energy consumption this spring.

The drawback of immersion cooling is that servicing and switching out servers is time-consuming and cumbersome, as they need to be lifted out of the container, drained and cleaned. An alternative is direct-to-chip cooling, where a metal ‘cold plate’ is installed directly above the processor inside the server and a coolant is then piped over the plate, drawing out the heat. Not only can this heat be recycled, but the use of dielectric oils also mitigates the risk of refrigerant leakage, and it requires only minimal modifications to the server room. In September, MHI signed a strategic alliance agreement with ZutaCore, a start-up that is pioneering direct-to-chip cooling. This alliance allows MHI to sell ZutaCore’s technology under our brand as part of our holistic solutions for data center customers.

Powering up

Depending on its server mix, a data center can utilize one or more of these cooling techniques to reduce energy consumption. But it will still have to decarbonize whatever electricity it does require. Smaller data centers or those in remote locations can consider a co-generation system like MHI’s EBLOX that combines renewable energy, battery storage and an emergency generator.

Medium-sized facilities can use gas engines that will in future run on hydrogen. And hyperscale data centers will be big enough to justify a dedicated gas turbine, with MHI, among others, able to supply turbines that can co-fire hydrogen today and will soon be 100% powered by this zero-carbon fuel.

Having achieved sustainability for cooling and power, the next step in optimizing a modern data center is to combine the software that controls its ‘white space’ (the computer room) with the software controlling its ‘grey space’ (the power and cooling equipment) into an integrated control system. In July, MHI teamed up with Germany’s FNT to provide exactly this kind of integrated system, combining our experience in energy management technologies with their knowledge of IT infrastructure.

Building a business

Cutting-edge products and the ability to offer a one-stop solution for all the utility needs of a data center are attractive. But even then, customers want their supplier to have ‘boots on the ground’, meaning a robust service network, since equipment always develops problems. I learned this during my time marketing power systems in the US and UK and developing MHI’s long-term service agreements, and it is one reason, I believe, why we have achieved global market leadership in gas turbines.

This is also the rationale behind MHI’s recent acquisition of Concentric, a US company that provides data centers, utilities and electric forklifts with power solutions. With almost 1,000 staff across 58 locations, Concentric gives us an immediate nationwide sales and service network that can, over time, be expanded internationally.

Putting all of these pieces together to build a new business is challenging. Despite our extensive range of in-house technologies, it is not something we could have done without the help of the external partners I have mentioned.

Meanwhile, we are already working on a new initiative, so-called Edge Data Centers: a set of high-performance (high-heat) servers in a 12-foot container that can be trucked to any location. As bigger data centers focus increasingly on processing and storing “Big Data”, these Edge DCs can be easily scaled up to handle on-site rapid processing tasks and, eventually, to decentralize the cloud itself. This is not an industry that will ever stand still.

For inquiries related to data center decarbonization, contact us: [email protected]

Watch our video about the immersion cooling data center